I don’t know about you, but whenever I take my iPhone out for a run (which happens four times a year), I’m more inclined to scroll through songs than actually, you know, move my legs. I know playlists are an option, but I’m really more of an “as the mood strikes” kind of guy, which probably explains why I can only listen to 30 seconds of any given MP3.

What’s also problematic is that in order to avoid trees and wandering little children, I have to actually slow down and look at my phone to navigate it, which effectively turns my quad-annual runs into leisurely stroll-jogs.

Apple understands this isn’t ideal, which is why, in 2009, it filed a patent for a directional audio interface that would let people use its products without having to divert their eyes, such as when they’re driving. The patent was made public earlier this week and could actually be implemented in Apple devices somewhere down the line.

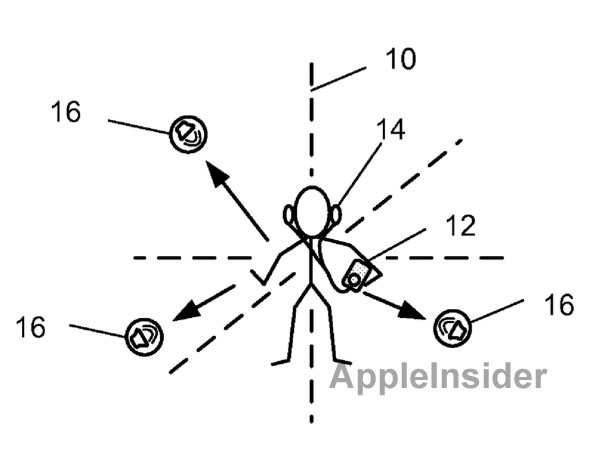

Built on “audible control nodes,” the interface would work as an “alternative user interface” to multitouch. Apple Insider has a great walkthrough for how the technology works, but it essentially goes like this:

Audible cues are delivered to the user through either their headphones or their car’s speakers. These cues can be presented as spatially distinct noises, synthesized speech, or snippets of a song, and they sound as if they’re coming at the user from different directions.

Each audible cue represents a different item on the traditional navigation menu. If a user wants to select something, like another song, they simply need to move their device in the direction of the sound. Think of it like a Kinect, but using only audio.
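
For the curious, here is a rough Python sketch of that "point toward the sound" idea. It is purely illustrative and not taken from Apple's patent: the names `AudioNode`, `layout_nodes`, and `select_node`, the even spacing of menu items around the listener, and the 30-degree tolerance are all assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AudioNode:
    """A menu item paired with the direction its cue seems to come from."""
    label: str
    azimuth_deg: float  # 0 = straight ahead, measured clockwise (assumed convention)


def angular_distance(a: float, b: float) -> float:
    """Smallest absolute difference between two angles, in degrees (0 to 180)."""
    diff = abs(a - b) % 360.0
    return min(diff, 360.0 - diff)


def layout_nodes(labels):
    """Spread the menu items evenly around the listener (an assumed layout)."""
    if not labels:
        return []
    step = 360.0 / len(labels)
    return [AudioNode(label, i * step) for i, label in enumerate(labels)]


def select_node(nodes, device_heading_deg, tolerance_deg=30.0):
    """Return the node closest to where the device points, or None if none is close enough."""
    if not nodes:
        return None
    nearest = min(
        nodes,
        key=lambda n: angular_distance(n.azimuth_deg, device_heading_deg),
    )
    if angular_distance(nearest.azimuth_deg, device_heading_deg) <= tolerance_deg:
        return nearest
    return None


# Four hypothetical menu items land at 0, 90, 180, and 270 degrees.
menu = layout_nodes(["Next song", "Previous song", "Playlists", "Volume"])
choice = select_node(menu, device_heading_deg=95.0)
print(choice.label if choice else "Nothing selected")
```

Pointing the device roughly to the right picks whichever item sits there, while pointing halfway between two cues selects nothing. A real implementation would also have to render the cues spatially and read the device's motion sensors, which this sketch leaves out.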

Mind you, the technology is still only at the patent stage, but it’d make for an interesting feature and could help minimize distractions while you’re doing more important things, like keeping your eyes on the road.

It probably won’t save my yet-to-be-established running career, but I’d like to at least think it might.

(via Apple Insider)