The Federal Trade Commission (FTC) released a sobering report on Monday about the state of app privacy. And not just any apps: specifically, mobile ones used by kids. The report, titled “Mobile Apps for Kids: Disclosures Still Not Making the Grade,” looked at Google Play and Apple’s App Store and found that a majority of vendors don’t divulge information to parents about what they’re collecting from kids who use these apps, how they’re sharing this data and who has access to it.

A “majority”? Try 80%. The FTC found that only 20% of the apps tested disclosed “any information” at all about their privacy mechanics.

Even more unsettling, the FTC found that 60% of the apps tested transmit information to someone else — occasionally the app developer, but more often “to an advertising network, analytics company, or other third party.” The FTC theorizes this could mean third parties are building detailed behavioral profiles of children. (The only thing off about this theory, in my view, is the FTC’s tentative use of the word “could.”)

See the pattern here? Stop me if any of this surprises you: The FTC found 58% of the apps it tested contain advertisements, and that only 15% revealed the presence of advertising on their download page. Twenty-two percent of apps tested included links to social networking services, but only 9% mentioned that prior to downloading. And fully 17% allowed kids to purchase virtual goods (prices ranged from $1 to $30), while providing few or simply unclear guidelines about how these in-app purchases worked.

Summary: It’s the wild west in app-land when it comes to privacy metrics, especially if you’re a kid, and most app-makers don’t offer even minimal guidelines for decision-makers like parents.

It’s not the first time the FTC’s released such a report, which makes it doubly troubling. In February 2012, the FTC released its first look at privacy in children’s apps, a staff report titled “Mobile Apps for Kids: Current Privacy Disclosures Are Disappointing.” The report concluded that “neither the app stores nor the app developers provide the information parents need to determine what data is being collected from their children, how it is being shared, or who will have access to it.”

Here’s why this isn’t just a problem, it’s one that’s galloping away from us: According to the new FTC report, Apple’s App Store, which currently has over 700,000 apps, has seen an app increase of 40% in the space of just nine months (through September 2012). Google Play’s growth has been even more explosive: also at over 700,000 apps, it grew 80% over roughly the same period. Companies like Apple and Google, as well as most of us in the tech coverage biz, are quick to celebrate those numbers as epic feats, while ignoring the corollary: Explosive growth means proliferation of all the shortcomings, too.

This isn’t an issue we disagree about as a country, by the way. According to a study by the Berkeley Center for Law & Technology, 78% of U.S. consumers surveyed considered the information on their mobile devices to be “at least as private as information stored on their home computers,” and an overwhelming 92% said they’d either “definitely” or “probably” not allow their location information to be used for targeted advertising. That’s from a survey of 1,200 adults. (Imagine what the response might have been had the questions in this study included language about children.)

Before I explain why the FTC report isn’t enough, I want to take a moment to acknowledge that this is the Federal Trade Commission we’re talking about — an independent agency of the U.S. federal government — doing what it was created to do back in 1914: “To prevent business practices that are anticompetitive or deceptive or unfair to consumers; to enhance informed consumer choice and public understanding of the competitive process; and to accomplish this without unduly burdening legitimate business activity.” In other words: a good example of the federal government doing careful, important research and coming away with information we can use, right now.

But it’s not enough. In fact it’s a little discouraging to think that the original FTC report arrived in February and, nearly a year on, the situation’s actually worsened (factoring in marketplace growth and the sharp rise in mobile app use).

Should we depend on the FTC to sound these kinds of alarms in the first place? Speaking as a new parent of a child who’ll at some point be immersed in smartphones and tablets and a veritable cosmology of apps, it’s not enough to see a report like this once or twice a year, shake our fists, then forget about it until our kids are “inexplicably” being solicited by cosmetics or toy or clothing (or whatever) companies — not when the growth rate of this market’s completely off the scale.

The immediate solution, and I’m really speaking to fellow parents here, is to fully engage with our kids on an app-by-app basis: locking down devices so apps can’t be installed willy-nilly, restricting location services where appropriate, controlling which apps can or can’t access photos, scrutinizing every app before downloading it, avoiding outright any apps that don’t divulge their privacy practices upfront, and providing feedback to vendors who do lay all their cards on the table when the privacy behavior seems disagreeable.

What else can we do? The FTC’s recommendations in its report aren’t enough (they’re only recommendations, after all), but they’re a start.

“Incorporating privacy protections into the design of mobile products and services” probably needs to be reworded. Mobile products and services already offer privacy protections — they just need significantly better and more transparent ones.

But yes, I think parents would definitely appreciate “easy-to-understand choices about the data collection and sharing through kids’ apps,” as well as “greater transparency about how data is collected, used, and shared.” Apple and Google have gone to basic lengths to include privacy controls in iOS and Android devices, but they’ve stopped short of requiring that app-makers divulge all of the things the FTC’s talking about above, pre- or post-download. When I bring up “Privacy” on my iPhone, for instance, I can fiddle with Location Services, contact sharing, calendar sharing and a handful of others, but there’s nothing about which apps are collecting usage data, how often that data’s collected or what’s being collected, specifically.

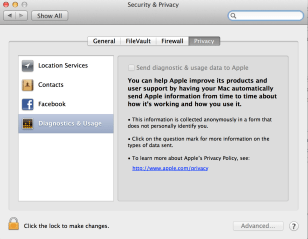

What we really deserve, though, is an all-in-one switch, similar to the one Apple offers under OS X’s privacy settings, where you have a single checkbox to “Send diagnostic & usage data to Apple” (see pic at right). I think parents could get behind — if not a blanket “on/off” switch at the OS level that locks everything up, then at least an app-level option to do so, for every app, as a prerequisite to being on Google or Apple’s respective app stores.

In any event, the FTC plans to release further reports (“We’ll do another survey in the future and we will expect to see improvement,” says FTC Chairman Jon Leibowitz) and it’s also launching an investigation into who might be violating the Children’s Online Privacy Protection Act or “engaging in unfair or deceptive practices in violation of the Federal Trade Commission Act.” The investigation is non-public, so it’s unclear whether the FTC will eventually name names. I hope they do. We deserve to know that, too.