A natty Steve Jobs poses with a room full of Macs in 1984.

On January 24, 1984, at the Flint Center on De Anza College’s campus in Cupertino, California, Apple formally announced the Macintosh at its shareholder meeting, in front of an audience so packed that large numbers of people who owned Apple stock couldn’t get in at all.

Here’s a video of the entire event, complete with an introduction by then-CEO John Sculley apologizing to the shareholders who were stuck outside:

[youtube=http://www.youtube.com/watch?v=YShLWK9n2Sk]

Drawing heavily on inspiration from Xerox’s PARC lab and other research that came before it, as well as Apple’s own Lisa — but adding plenty of its own innovations — the Mac was the first successful computer with a graphical user interface, a mouse and the ability to show you what a printed document would look like before you printed it. As the computer turns 30, it’s tempting to celebrate simply by remembering how profoundly its debut changed personal computing.

But as I think about the anniversary, I’m at least as impressed by two other facts about the Mac:

1) It’s actually existed for 30 years

2) More important, it’s mattered for 30 years

In other categories of products, something being around for decades, continuing to evolve and maintaining its popularity isn’t all that unusual: Consider, for instance, the Toyota Corolla, which has been with us since 1966.

But the Mac is the only personal computer with a 30-year history. Other than Apple itself, the leading computer companies of 1984 included names such as Atari, Commodore, Compaq, Kaypro and Radio Shack — all of which have since either left the PC business or vanished altogether. Even IBM, personified as the evil Big Brother-like overlord in the Mac’s legendary “1984” commercial, bailed on the PC industry in 2004. That the Mac has not only survived but thrived is astonishing.

Technically, the Macs of today are actually based on operating-system software that originated with the computers made by NeXT, the company Steve Jobs founded after being ousted from Apple in 1985 and then sold to it in 1996. Philosophically, aesthetically and spiritually, though, they’re very much descendants of the original 1984 Mac. The same things Apple cared about then — approachability, integration of software and hardware, a willingness to do fewer things but do them better — it cares about today. It’s always just tried to build the best, most Apple-esque personal computers it could with the technology available to it at the time.

And if you trace the history of the Mac from 1984 to 2014, you keep coming up with ways the platform influenced the rest of the industry (yes, even during the scary period in the mid-1990s when the company flirted with financial disaster).

So for this list, I’m skipping the reasons why the Mac mattered in 1984. Here’s why it’s never stopped being the world’s most influential personal computer.

1. It made icons into art.

The first Mac was the first fully mainstream computer with a graphical user interface, and therefore the first one with icons. They were famously designed by Susan Kare, who later did icons for Microsoft, Facebook and other clients. Today, icons are everywhere — on computers, phones, tablets and the web. And even though today’s designers have more pixels and colors to work with than Kare did back in the day, their work, like hers, involves visualizing concepts in a way that’s immediately understandable, even at a teensy size.

2. Macs have always begged to be networked.

Starting in 1985, when computer networking was still a pricey and exotic rarity, Apple made it easy to connect Macs to each other using a technology called AppleTalk. The original iMac had Ethernet at a time when that was a startlingly advanced feature for a home computer. And when Apple unveiled a laptop with built-in Wi-Fi at Macworld Expo New York in 1999, the notion of being able to use the Internet without any cords was still so startling that Phil Schiller jumped from a great height onto a mattress while clutching an iBook to prove that no strings were attached.

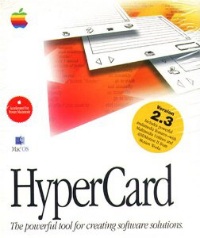

3. HyperCard helped inspire the web.

Bill Atkinson, the genius who did as much as anyone to make the Mac’s interface great, also created 1987’s HyperCard, a Mac application that let anyone create stacks of on-screen cards with text, images and hyperlinks. Widely applauded at the time — and bundled with every Mac — HyperCard never quite changed the world. But it influenced Tim Berners-Lee’s early collaborator Robert Cailliau, who had a hand in inventing the basic technologies of a rather HyperCard-like technology called the World Wide Web.

4. Microsoft Office was born there.

Microsoft Windows and Microsoft Office have had such a symbiotic relationship for so long that it’s easy to forget that Office started out on the Mac. Back in 1989, Microsoft bundled up the first version — with Mac editions of Word, Excel, PowerPoint and an e-mail app — as a limited-time offer. It was a hit, so the bundling became permanent, and a Windows version arrived in 1990.

5. It made pointing portable.

Grizzled tech veterans recall the age when notebook computers didn’t incorporate a pointing device — you either plugged in a mouse, strapped on some sort of ungainly offboard trackball or did without. That changed in 1991 when Apple announced its first PowerBooks, which put a palm-rest area below the keyboard, with a sizable trackball in the middle. Trackballs didn’t last all that long before giving way to touchpads, but the palm rest is still a standard feature on nearly every laptop.

6. QuickTime kickstarted digital video.

If you operate under the theory that Apple didn’t do anything of lasting importance during the 11 years that Steve Jobs was in exile, consider this: QuickTime, which put smooth, high-quality video on a Mac’s screen, was groundbreaking when it debuted in 1991. Its descendants are in every Mac, iPhone and iPad, and the standards it shaped led to the era of YouTube and Netflix.

7. Touchpads took over.

As useful as built-in trackballs were, they had their downsides: They took up a lot of space, required periodic cleaning and were prone to mechanical failure. And their era turned out to be brief. In 1994, Apple shipped the first PowerBooks with touchpads — the company calls them Trackpads — and they soon became the de facto mobile pointing device almost everywhere, with the exception of ThinkPads and a few other machines with tiny pointing sticks.

8. Macs never have trouble saying goodbye.

Part of Apple’s design minimalism involves removing features it’s decided are no longer necessary — and almost always, it errs on the side of removing them too early rather than too late. When 1998’s original iMac ditched the 3 1/2-inch floppy drive — a technology introduced 14 years earlier by the first Mac — it provoked a fair amount of anguish and even conspiracy theories. But within half a decade or so, the floppy was gone everywhere.

9. For logos, it proved upside-down is right.

These days, nearly all laptops have prominent logos on the back of their screens. From the perspective of the users, they’re upside-down, which means that they’re right-side up when you flip the computer open, allowing them to serve as tiny billboards that display a branding message to everyone else around you. But notebooks didn’t always have those logos, and even Apple machines, at first, had them the other way around. In 2012, former Apple employee Joe Moreno explained how the logos got flipped, a design decision that the rest of the industry ended up following.

10. The Apple Store was originally a Mac store.

When the first two Apple Store locations opened on May 19, 2001, in Tysons Corner, Va., and Glendale, Calif., they weren’t stocked with iPhones or iPads. They didn’t even carry iPods, which didn’t exist until October of that year. No, they offered only computers and related products, which meant that Apple’s revolutionary approach to electronics retailing originated as a way to sell more Macs.

11. Steve Jobs’ media hub vision came true.

Back in the early part of this century, when Apple was busy creating apps such as iTunes, iPhoto and iMovie, Steve Jobs spent a lot of time pitching the idea of the Mac as a media hub — a device you’d use to manage digital music, photos, video and other content you created and consumed using a variety of then-new gizmos. The concept worked. And if it’s less of a given today that you’ll use a computer for those tasks, it’s only because the iPhone and iPad proved that phones and tablets can also be great media hubs.

12. It gave Bluetooth a boost.

In 2002, when phones started adding a wireless technology called Bluetooth, there wasn’t much you could do with it. But you could use it to transfer data between your phone and a Mac — at first using Apple’s Bluetooth adapter and, shortly thereafter, via Bluetooth built into new Macs. The technology never became all that common on Windows PCs, but it continues on as a standard Mac feature to this day.

13. Macs keep proving you can start fresh.

In 2001, Apple dumped Mac OS (the original Mac operating system, which had grown outdated and creaky) and replaced it with the state-of-the-art OS X. If the company hadn’t been willing to do that, it’s unlikely that Macs would exist today. Two other similar shifts (the move from 680x0 processors to PowerPC chips, and then the move from PowerPC chips to Intel ones) were equally daring. Strangely, Apple’s fearlessness about such transitions, successful though they’ve been, is one thing about the company that few of its rivals ever imitate.

14. It let you see your keyboard in the dark.

The 17-inch PowerBook that Apple released in 2003 had the largest screen anybody had put into a notebook up until that time — and it did inspire similarly humongous Windows laptops. But I’m bringing it up here because it was the first portable computer with a backlit keyboard and light sensors, which let it turn on the illumination only when necessary. Plenty of other models have since followed its lead, to the point where lack of illumination is a sign that a laptop suffers from excessive cost-cutting.

15. iTunes built commerce into a computing device.

In 2003, Apple started selling digital music downloads. They were primarily meant to wind up on your iPod, but at first you needed a Mac to buy them, since the transaction happened in iTunes, which ran only on a Mac at the time. I include this development here not because of its impact on the music industry — which was epic — but because it introduced the concept of a digital content store being built into a computing device — something which eventually became standard practice everywhere, for music, video, apps, games and books.

16. The iMac defined the modern all-in-one.

In the earliest days of personal computing, there were machines with the screen and electronic guts built into one case, such as Commodore’s PET 2001. Then the design faded away until Apple revived it with the original Mac. Then it faded away again until 1998’s iMac revived it. When Apple released the iMac G5 in 2004 — with a big flat screen built into a slab-like computer on a pedestal — the rest of the industry gradually copied the design. A decade later, if you’re buying a desktop computer, there’s a good chance it’s an iMac or one of its clones.

17. It made solid-state storage make sense.

Since the 1980s — when NEC released an early notebook called the UltraLite — PC makers had tinkered with the idea of replacing rotating storage devices such as hard disks with reliable, fast, compact, power-efficient solid-state memory. But solid-state only became truly mainstream in 2010, when Apple made it a standard feature on the second-generation MacBook Air. It’s still far pricier and more limited in capacity than a hard disk, but it’s now the only form of storage Apple uses for portable Macs — and, at long last, a commonplace technology in other manufacturers’ laptops.

18. Retina is a great leap forward for the eyeballs.

Since the 1980s, computer display resolutions have been getting higher, usually in baby steps that made new screens just a little bit better than old screens. But when Apple released the first MacBook Pro with a Retina display in 2012, it quadrupled the pixels of its predecessor, among the most impressive one-fell-swoop advances in PC history.

19. Where would computer design be without it?

Virtually every computer that runs Windows owes something to the Mac, but in some cases, as with certain models in HP’s appropriately named Envy line, the industrial-design debt is so absolute that it’s embarrassing. A goodly percentage of Ultrabooks (and some Chromebooks) also knock off Apple designs to a degree that, frankly, seems wholly unnecessary.

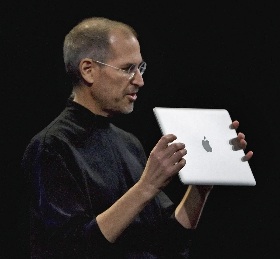

20. No Macs, no iPhones or iPads.

If Apple hadn’t made Macs, it wouldn’t have had a fraction of the industrial-design chops it needed to pull off the iPhone and iPad. And it wouldn’t have had the necessary software, either, since iOS is based on the Mac’s OS X.

In the “1984” commercial that introduced the Mac, Apple suggested that a world without its new machine would be grim and dystopian. That was a fantasy designed to sell computers, not a statement of fact. But have the last 30 years of life on Planet Earth been meaningfully better because that first Mac — and all the ones that have followed — existed? You bet — and I hope that there’s lots more to come.